Why 75%+ of Enterprises Admit They Can’t Secure Their Non-Human Identities

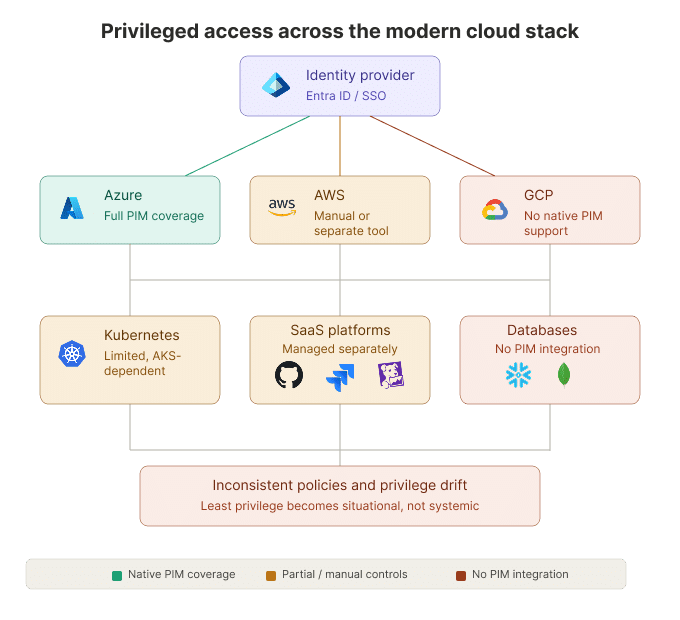

Security teams are losing the battle to secure non-human identities (NHIs) for one simple reason: machine identities are now created inside the systems that ship software. They appear in CI/CD pipelines, Kubernetes workloads, SaaS integrations, and AI-driven workflows faster than central IAM teams can inventory or review them.

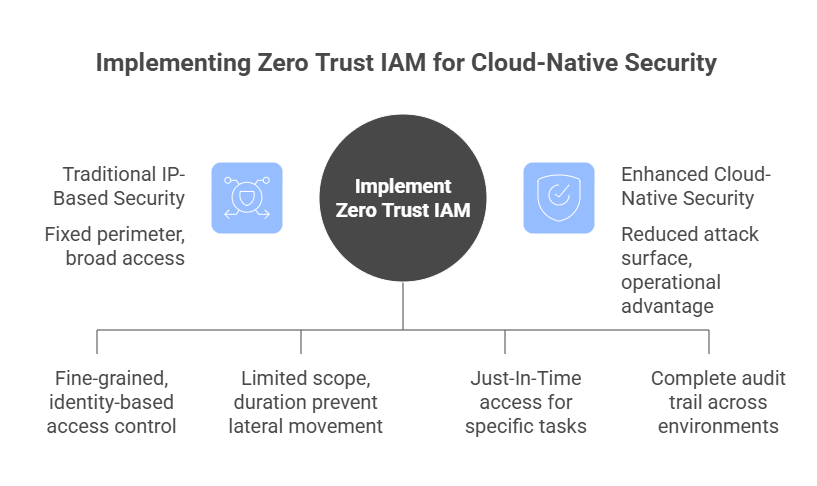

The problem is not just visibility. It’s that many of these identities keep more access than they need, for longer than they need it. In cloud-native environments, that turns over-scoped service accounts, CI roles, and automation tokens into persistent runtime risk. Securing non-human identities requires a shift from static governance to continuously discovered, just-enough access, with time-bound permissions enforced at the moment of execution.

How Non-Human Identities Escaped Centralized Control

Most discussions of NHIs focus on growth. But the bigger issue is that cloud-native systems have made identity creation part of delivery.

Kubernetes introduced service accounts tied to workloads. CI/CD pipelines began assuming IAM roles automatically. Infrastructure-as-code embedded identity creation directly into deployment logic. SaaS platforms normalized OAuth integrations and API tokens for everything.

Even then, identity creation remained visible enough for teams to track, at least roughly. AI has made that visibility harder to maintain. In copilots, agents, inference endpoints, and event-driven workflows, identities are now generated rapidly as part of execution.

So, the other half of the problem is velocity. In modern environments, machine identities are:

- Created programmatically

- Federated dynamically (OIDC, STS, workload identity federation)

- Scoped broadly under delivery pressure

- Rotated frequently but rarely re-evaluated

- Embedded in pipelines that developers control directly

Identity issuance has moved from centralized IAM teams into codebases, pipelines, and autonomous systems that developers iterate on daily. In that shift, identity stopped being a centrally managed admin object and became a runtime artifact generated inside delivery systems. That matters because security teams are no longer just dealing with identity growth; they’re dealing with privileged access they may not discover until it has already become a serious risk.

The New Breach Path Runs Through Machine Identity

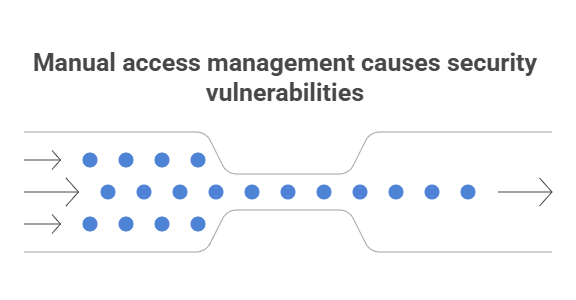

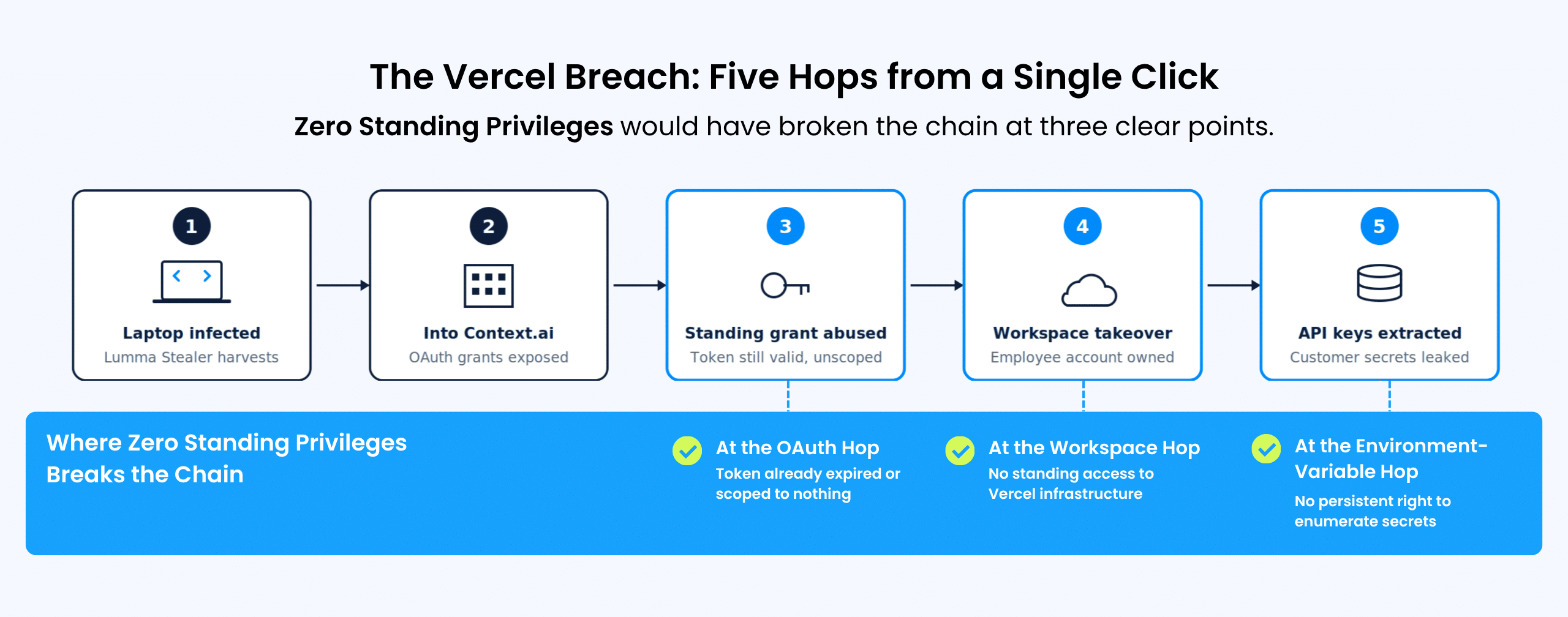

A few years back, when machine identities were relatively stable, compromise required persistence. An attacker needed long-lived credentials, privileged access that went unnoticed, or lateral movement through misconfigured infrastructure.

The explosion of automation means credentials are now continuously minted, exercised, and rotated. Many enterprises are only beginning to internalize what follows from that: their operational center of gravity has moved toward automation, and attackers have adapted accordingly.

Modern attackers focus on what a machine identity can do at runtime and how quickly it can act. Rather than hunting for long-lived credentials, the more efficient path is to compromise the automation layer itself (the CI/CD pipeline, the workload identity, or the federated trust relationship) and inherit the permissions that were intentionally granted to keep systems running smoothly.

Identity-based attacks often look indistinguishable from routine automation tasks. When an over-permissioned machine identity accesses object storage or mutates infrastructure via the cloud control plane, traditional user-centric signals may be absent or much weaker, since the activity is executed by a legitimate machine principal with valid credentials.

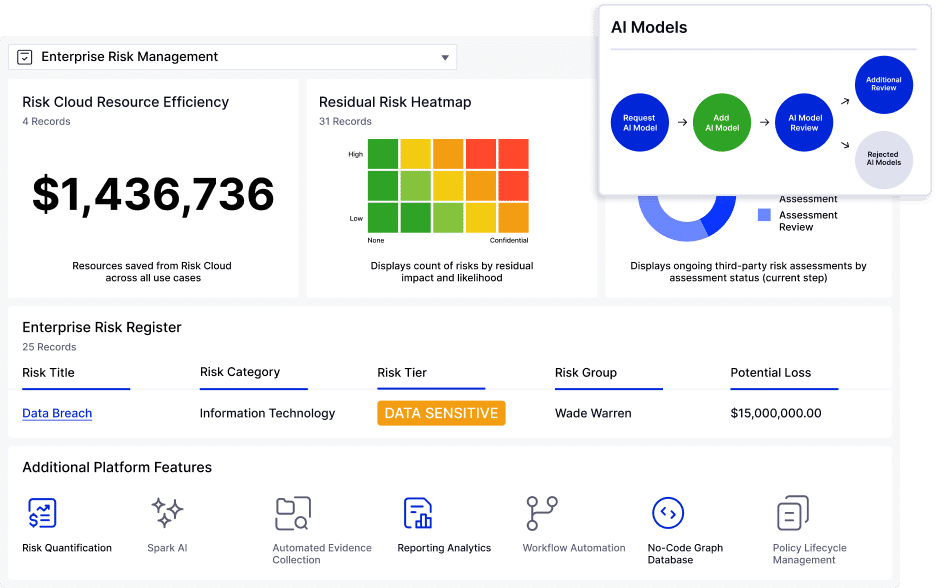

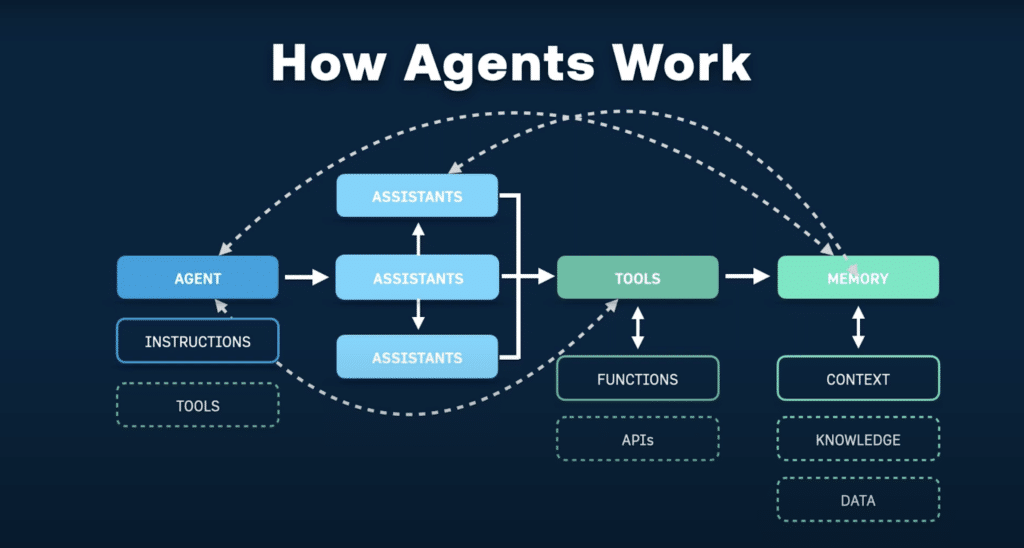

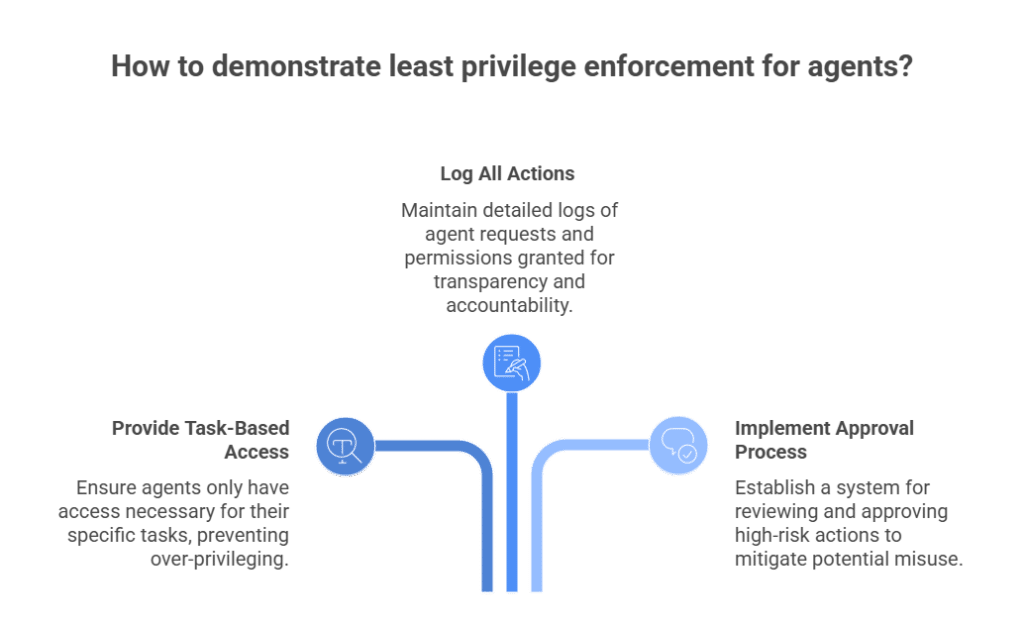

The problem deepens with AI agents. As agentic AI security becomes a more urgent concern, enterprises increasingly grant these systems delegated authority across multiple domains, from data retrieval and ticketing to infrastructure remediation and SaaS orchestration. The risk is that they are often over-privileged before the task begins, hard to inventory, difficult to audit, and capable of taking disruptive actions without the contextual guardrails or human authorization those actions may require.

Consider a common CI/CD path. A CI workflow exchanges an OIDC token for a cloud role so it can deploy infrastructure. That role was originally granted broad write access because teams saw tighter controls as a risk to release velocity. If a malicious dependency or compromised pipeline step executes inside that workflow, the attacker doesn’t need to steal a human admin account. They inherit a valid machine identity with legitimate permissions. From the cloud provider’s perspective, the requests may look routine: a known principal making authorized API calls during a deployment window.
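To make the federated trust step concrete, here is a minimal Python sketch of the check a trust rule might apply before a CI token is exchanged for a role. It assumes the token signature has already been verified and that `aud` arrives as a single string. The rule structure, the audience value, and the repository names are hypothetical; only the `aud` and `sub` claim names come from the OIDC standard. Narrowing this rule does not stop a malicious step running inside an allowed workflow, but it limits which workflows can obtain the role at all.

```python
from fnmatch import fnmatchcase

# Hypothetical trust rule: which OIDC audience and subjects may assume a deploy role.
TRUST_RULE = {
    "audience": "cloud-deploy",  # assumed audience value, for illustration only
    "allowed_subjects": ["repo:example-org/infra:ref:refs/heads/main"],
}


def may_assume_role(claims: dict, rule: dict) -> bool:
    """Return True only if the token's aud and sub claims match the trust rule.

    Assumes the token signature and expiry were verified before this call.
    """
    if claims.get("aud") != rule["audience"]:
        return False
    subject = claims.get("sub", "")
    # Glob-style match; note that "*" also matches "/" and ":" here.
    return any(fnmatchcase(subject, pattern) for pattern in rule["allowed_subjects"])
```

A wildcard pattern such as `repo:example-org/*` would admit every repository and branch in the organization, which is the kind of broad trust the scenario above depends on.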

What It Takes to Secure Non-Human Identities

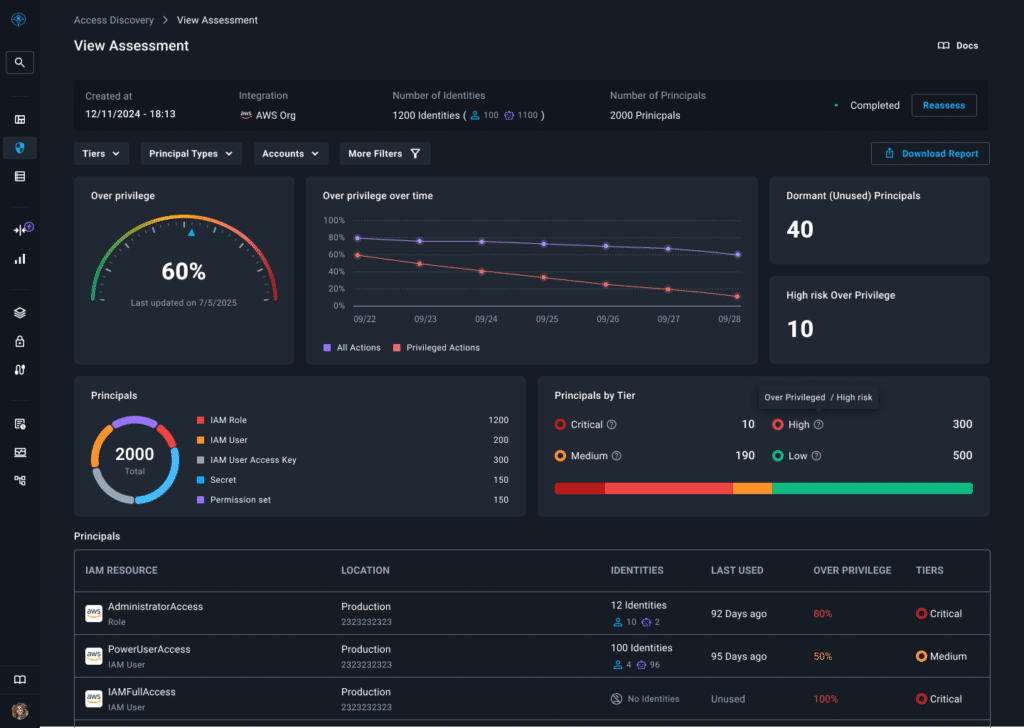

Access Discovery Is the Starting Point for NHI Risk Reduction

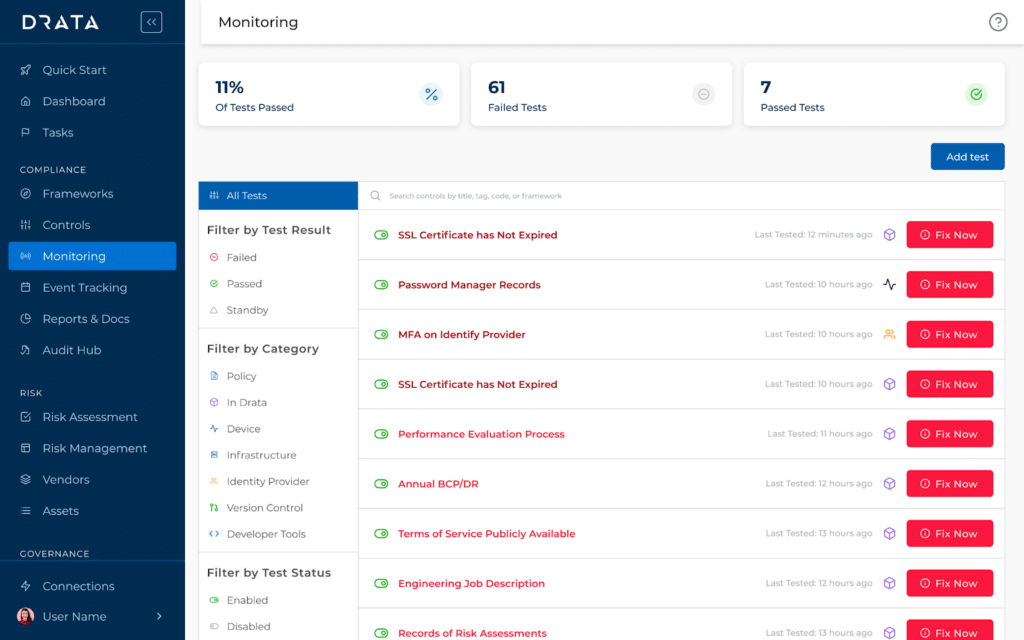

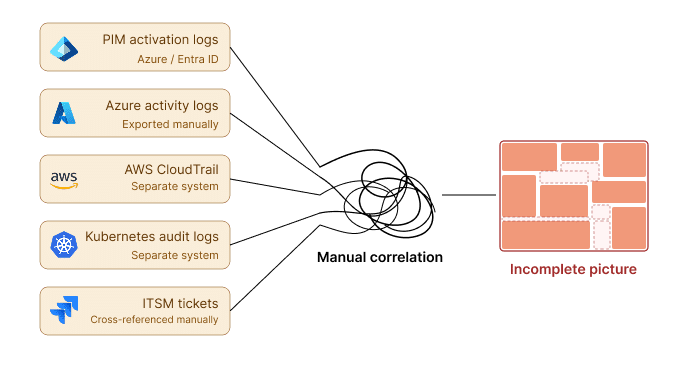

Continuous discovery helps solve the fundamental mismatch between automated identity creation and manual oversight. If service accounts, federated trust relationships, CI-issued principals, and OAuth scopes are being created as side effects of deployment, governance cannot rely on someone remembering to document them. Discovery has to be automatic, continuous, and tied to remediation.
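At its simplest, continuous discovery is a recurring comparison between what actually exists and what governance knows about. The Python sketch below illustrates that comparison only; the `Identity` record, the source labels, and the example names are hypothetical, and a real system would populate `observed` from cloud, cluster, and SaaS APIs rather than a hard-coded list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Identity:
    name: str
    source: str  # where it was observed, e.g. "kubernetes", "ci", "oauth"


def discovery_gaps(observed: list[Identity], inventory_names: set[str]) -> list[Identity]:
    """Return identities seen in the environment but missing from the inventory."""
    gaps = [identity for identity in observed if identity.name not in inventory_names]
    # Sort by source, then name, so repeated runs produce stable output.
    return sorted(gaps, key=lambda identity: (identity.source, identity.name))


observed = [
    Identity("ci-deployer", "ci"),
    Identity("billing-sync", "oauth"),
    Identity("api-sa", "kubernetes"),
]
gaps = discovery_gaps(observed, inventory_names={"api-sa"})
```

Each entry in `gaps` is a candidate for remediation: assign an owner, right-size its access, or remove it.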

Just Enough Access Has to Be the Default

For non-human identities, just-enough access has to be the default. Under delivery pressure, it’s tempting to grant NHIs broad scopes just to make things work. Service accounts receive project-level access, CI roles inherit write permissions across environments, and OAuth tokens are granted full API scope because fine-grained scoping is time-consuming. Over time, those permissions are rarely revisited.

If a machine identity only needs read access to one dataset during one workflow stage, granting it cross-environment write access creates unnecessary exposure. Just-enough access, also described as just-enough privilege (JEP), aligns permissions to the task, so if that identity is compromised, the blast radius is smaller by design. Right-sizing access to the task is the most direct way to limit that exposure without slowing delivery.
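One way to picture right-sizing is as a comparison between the permissions an identity holds and the permissions it actually exercises. The following Python sketch assumes usage data is already available, for example from audit logs; the permission strings are hypothetical and do not correspond to any particular cloud provider's naming.

```python
def right_size(granted: set[str], used: set[str]) -> dict[str, set[str]]:
    """Split granted permissions into those to keep and those to revoke.

    "used" is assumed to come from observed activity over a review window.
    """
    return {
        "keep": granted & used,     # granted and actually exercised
        "revoke": granted - used,   # granted but never exercised
    }


plan = right_size(
    granted={"dataset:read", "dataset:write", "infra:write"},
    used={"dataset:read"},
)
```

Here `plan["revoke"]` contains the two write permissions. The review window matters: a permission used only during quarterly jobs would look unused over a one-week sample.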

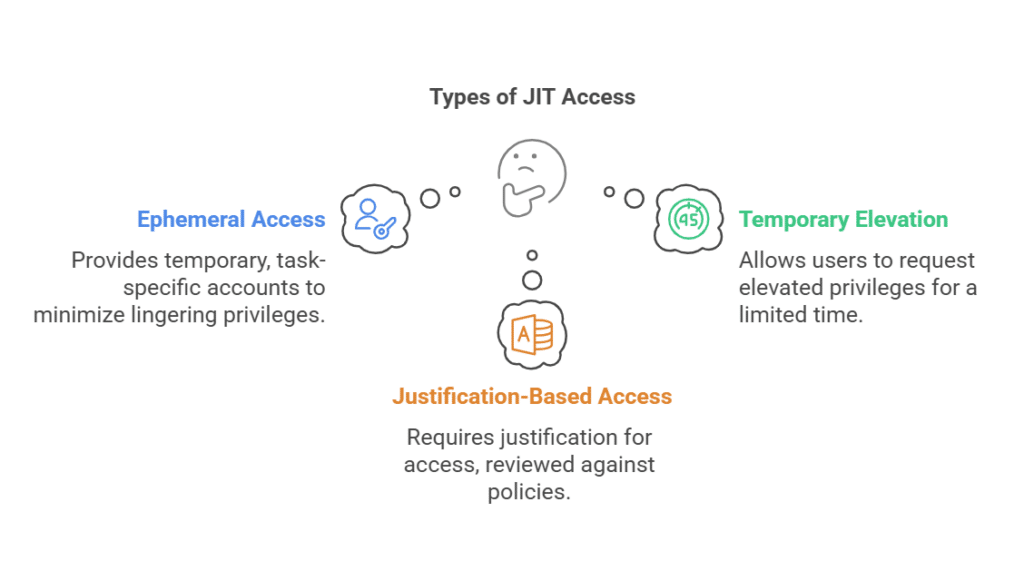

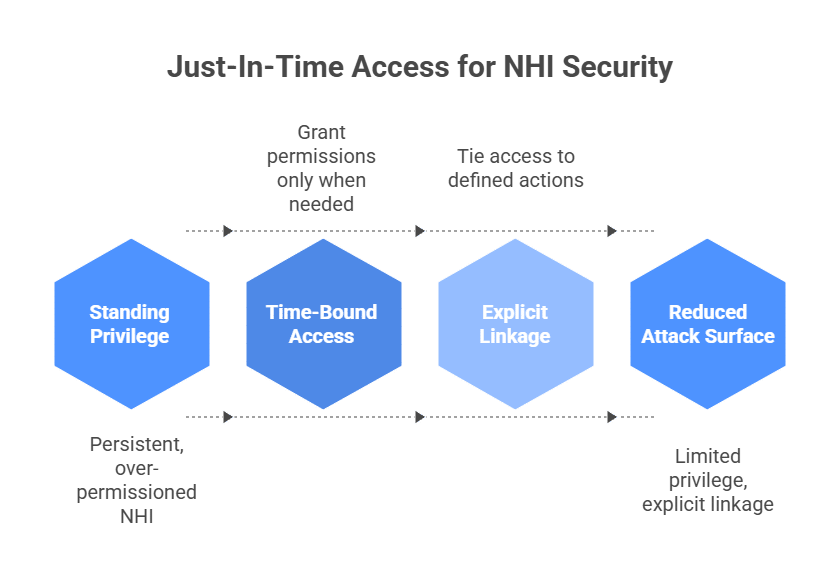

Time-Bound Access Reduces Standing Privilege

Even when access is right-sized, duration still matters because standing privilege increases exposure without improving IT service continuity. Non-human identities tend to persist, and in many environments so do their privileges. Time-bound access reduces that standing privilege by ensuring elevated permissions only exist for the window in which a workflow or remediation task actually needs them.

An over-permissioned service account, for example, might be used hundreds or thousands of times per hour. Standing privilege in machine-heavy environments doesn’t sit idle waiting for abuse. It is exercised constantly.

That means for any compromise, whether a leaked CI token, a container escape, or a poisoned dependency, the privilege is often already there. Instead of granting persistent write access to a deployment role “just in case,” just-in-time (JIT) access ensures that the privilege exists only during the execution window that requires it.

This has two effects. First, it shrinks the attacker’s inheritance window. If a machine identity is compromised outside its active task window, there is no privilege to exploit. Second, it forces explicit linkage between identity and intent. Access becomes an event tied to a defined action.
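A time-bound grant can be modeled as a permission with a start time and a lifetime, checked at the moment of use. The sketch below is illustrative only: the `Grant` type, its field names, and the example values are assumptions, not a description of any specific product's data model.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Grant:
    identity: str
    permission: str
    starts_at: datetime
    ttl: timedelta  # how long the grant stays valid

    def is_active(self, now: datetime) -> bool:
        """True only inside the window [starts_at, starts_at + ttl)."""
        return self.starts_at <= now < self.starts_at + self.ttl


start = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
grant = Grant("deploy-role", "infra:write", starts_at=start, ttl=timedelta(minutes=30))

during = grant.is_active(start + timedelta(minutes=10))  # inside the window
after = grant.is_active(start + timedelta(minutes=45))   # window has closed
```

Outside the 30-minute window the check fails, so a credential stolen at that point carries no write privilege.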

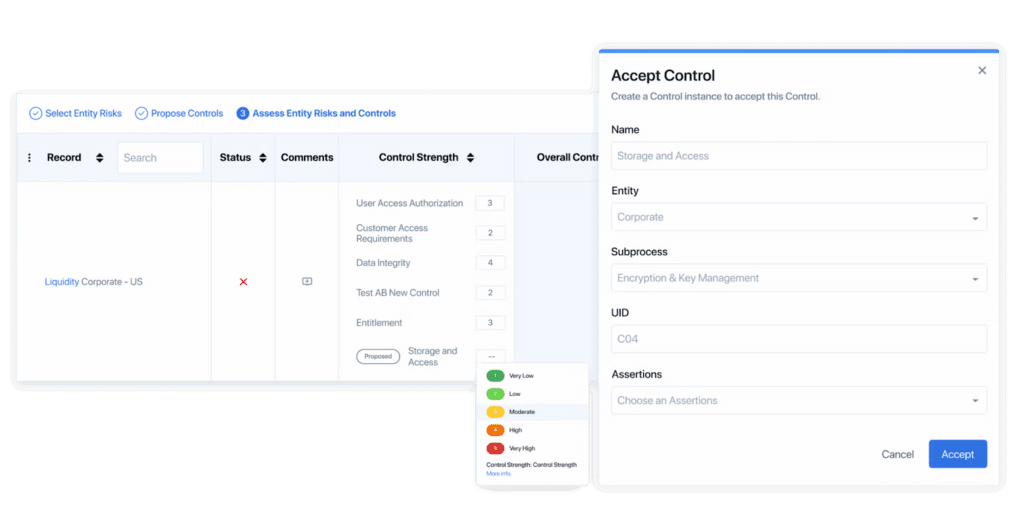

Contextual Authorization Closes the Runtime Gap

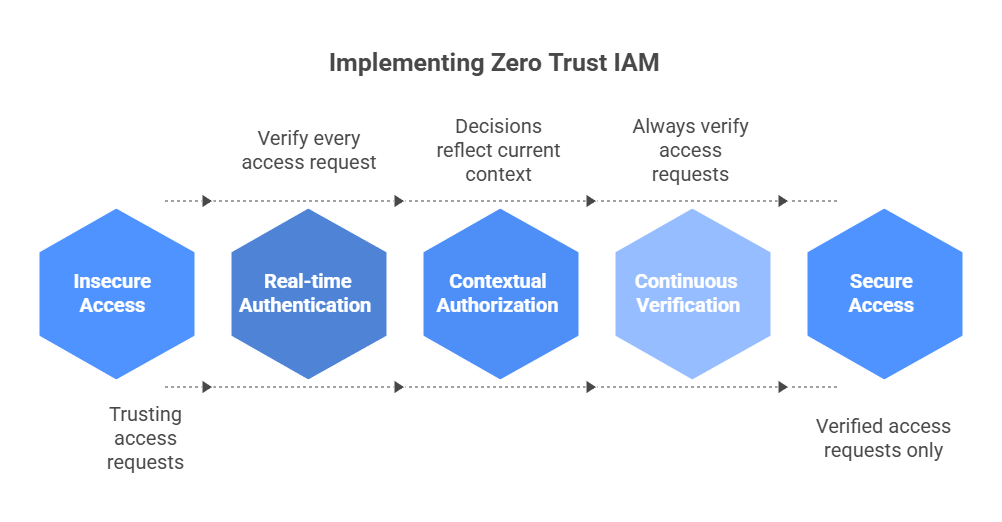

Traditional IAM answers: “Is this identity authenticated and assigned this role?” Modern threats exploit what that role can do in context.

Contextual authorization asks a harder question: “Should this identity perform this action in this environment at this moment?”

If an AI agent normally reads data but suddenly attempts destructive infrastructure changes, the system should evaluate that shift in behavior. If a machine identity operating in staging suddenly accesses production datasets, that context matters.

Most enterprises cannot answer contextual questions at runtime, even as AI-driven attacks make provisioning-time controls less effective. That’s another blind spot attackers often exploit in machine-led attacks.

Contextual authorization moves enforcement to the moment of execution, which is where modern identity-based attacks actually occur.
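The two examples above (an agent that normally reads attempting a destructive action, and a staging identity reaching into production) can be expressed as a runtime check against a behavioral baseline. This Python sketch is a simplification under stated assumptions: the `BASELINE` table, the identity name, and the action and environment labels are all hypothetical, and a real system would derive the baseline from observed behavior and policy rather than a static dictionary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    identity: str
    action: str        # e.g. "read", "delete"
    environment: str   # e.g. "staging", "production"


# Hypothetical baseline: what each identity normally does, and where.
BASELINE = {
    "report-agent": {"actions": {"read"}, "environments": {"staging"}},
}


def authorize(req: Request, baseline: dict = BASELINE) -> tuple[bool, str]:
    """Decide at request time whether the action fits the identity's context."""
    profile = baseline.get(req.identity)
    if profile is None:
        return False, "unknown identity"
    if req.action not in profile["actions"]:
        return False, "action outside normal behavior"
    if req.environment not in profile["environments"]:
        return False, "environment outside normal scope"
    return True, "allowed"
```

A denial here does not have to be final. It could instead route the request to a human approver, which keeps legitimate but unusual work possible.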

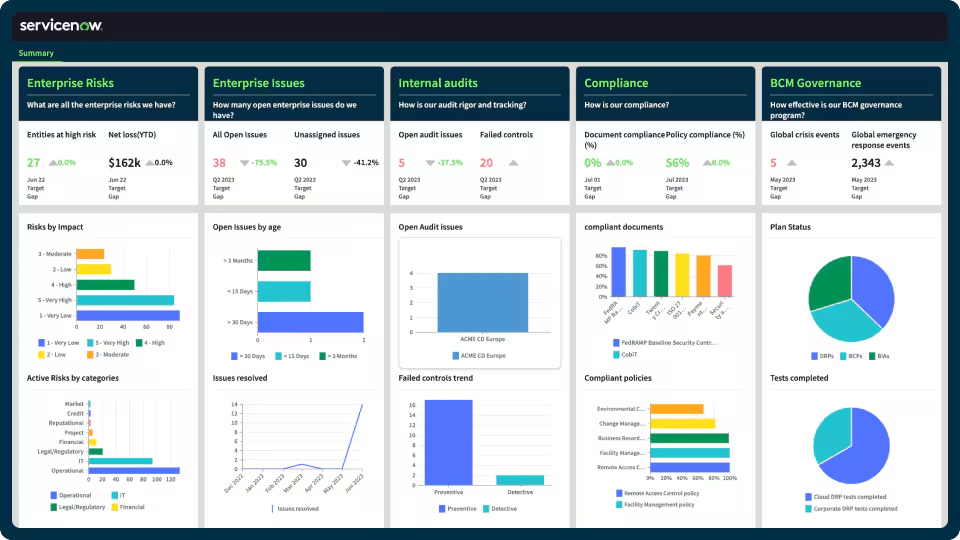

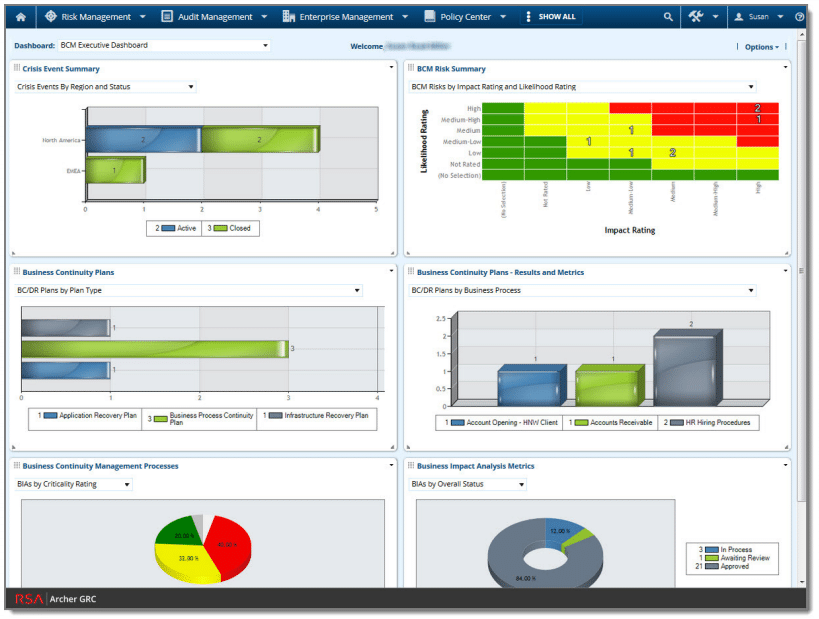

Enterprises Need Runtime Identity Security, Not Just Governance

Most enterprise IAM programs were designed around governing human lifecycle events: joiner, mover, and leaver. In this framework, access is tied to job function, roles are mapped to departments, and reviews happen periodically. Machine identities don’t neatly follow lifecycle events, though.

A workload identity might exist because a deployment template defines it. An API key might exist because a developer needed an integration to work.

These identities don’t “leave the company.” They linger in config files, Terraform modules, Helm charts, GitHub secrets, and CI workflows. And because they aren’t people, they often don’t trigger the same governance instinct. Identity governance built around HR metaphors simply doesn’t map to automation-driven systems.

Going forward, NHI security needs to move away from the people-centric model. Instead of asking, “Does this role have appropriate permissions?” the question becomes, “Should this identity perform this action in this environment at this moment?”

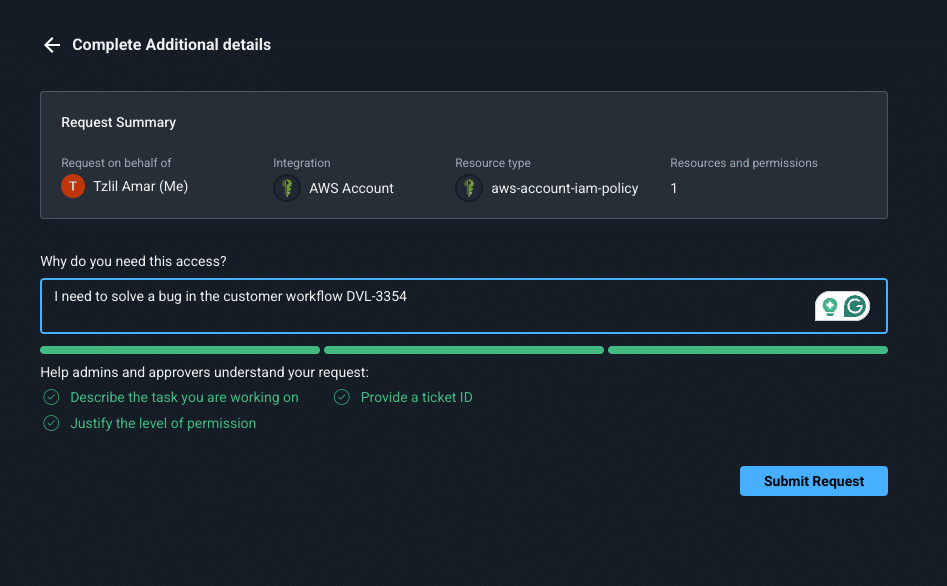

That shift only works if it fits the way engineering teams actually operate. Access has to be requestable, enforceable, and auditable inside the tools developers already use, whether that’s Slack, Teams, CLI, CI/CD workflows, or cloud platforms. Otherwise, teams fall back to tickets, delays, and the same standing permissions they were trying to eliminate.

Enforcement must occur where the action happens: at the API and endpoint level, not just in static role assignment. Permissions have to be dynamic, contextual, and tied to the requested action, environment, and approval logic in that moment. Until identity security operates at the same speed as automation, machine-led systems will keep outpacing human-era controls.

What Enterprises Must Change to Secure NHIs

Securing non-human identities doesn’t get easier with another layer of static IAM policy. Machine identities are created and exercised at the speed of automation, so the controls around them have to operate at that same speed. That means continuous visibility into where NHIs exist, just-enough permissions scoped to the task, and access that expires when the work is done, not long after the risk has already been introduced.

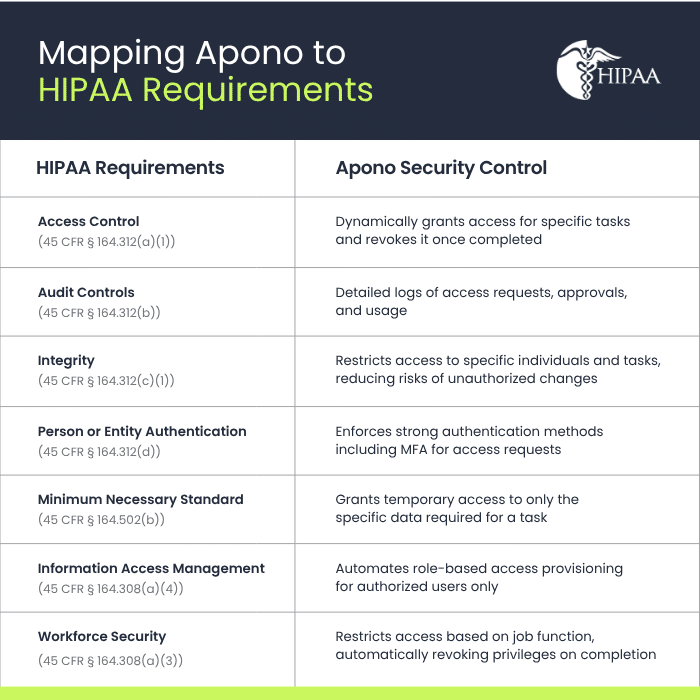

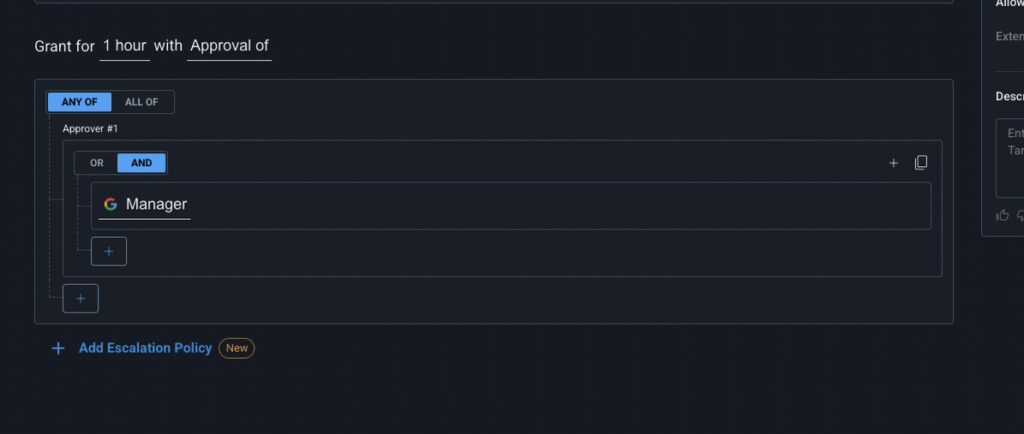

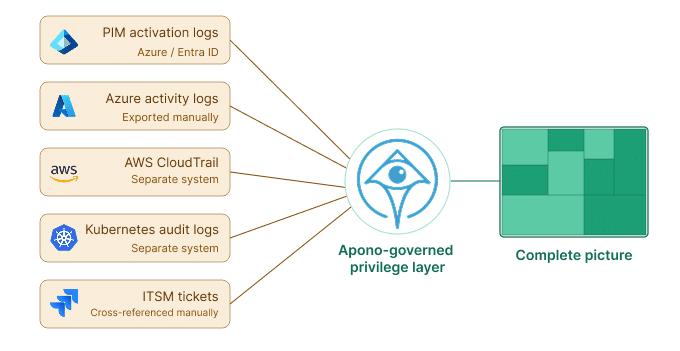

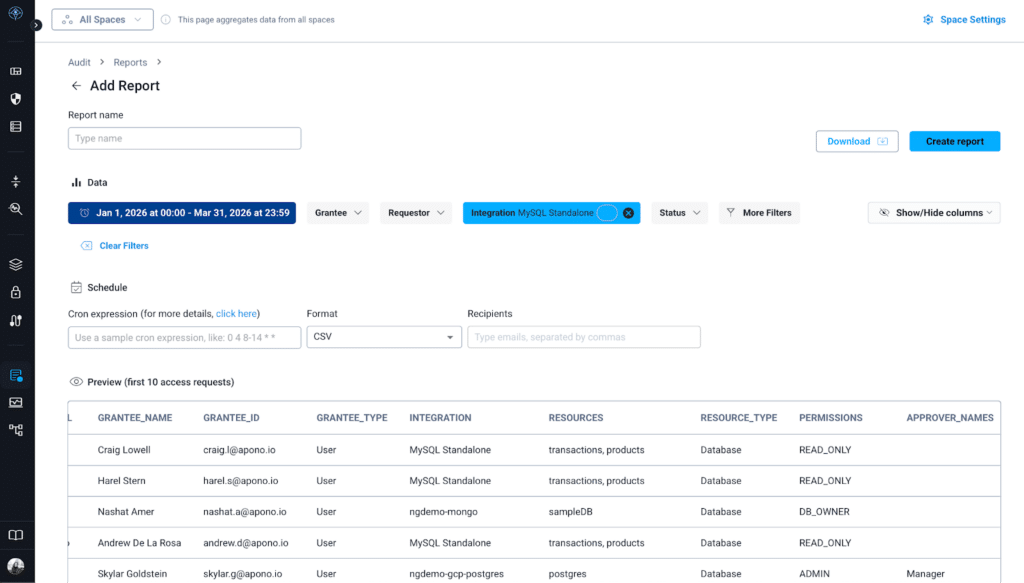

That’s the shift Apono is built to support: cloud-native access automation that removes standing privilege without slowing developers down. Teams can discover risky access, right-size permissions, grant time-bound access through Slack, Teams, or CLI, and enforce approvals and auditability without falling back to tickets or static roles. With auto-expiring permissions, break-glass and on-call workflows, comprehensive audit logs, and API-driven enforcement across the stack, the model fits modern machine identity risk far better than manual governance ever could.

As non-human and AI-driven workloads continue to multiply, the winning approach is access automation that enforces least privilege in real time. Download the PAM Buyer Guide for modern cloud environments to understand which access models actually work across human, non-human, and AI-driven workloads. Alternatively, book a demo if you’re ready to see how Apono works in practice.